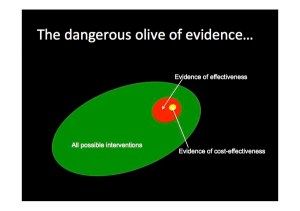

The Dangerous Olive of Evidence. Yes, that does sound a bit weird, but please stick with me for the explanation. Close your eyes and just imagine an Olive (don’t worry, I’m not trying to trick you into a Mindfulness experiment).

Imagine a nice plump juicy green olive; Spanish, Greek, Italian, take your pick. Enjoy the taste of that delicious olive flesh, until your teeth inevitably crunch upon the little wooden pip. Perfect, unless you buy the de-pipped type, but I’m sure you get my point.

Well that was delicious, but what’s an olive got to do with evidence? First off, you have to imagine the whole olive is all of the evidence that is potentially available about a particular topic or subject. Secondly, you have to think of the small wooden pip as the amount of evidence that people actually use to make decisions or policy.

The ‘dangerous olive of evidence’ theory works on the basis that only a very small proportion of what could be used is actually used. If you subscribe to the view that a good decision is based upon using as much information as possible (within reasonable boundaries of time & cost), then ignoring so much potentially useful information isn’t good practice. Look at what you are missing.

This idea was put across by Dr Fiona Spotswood last week at #behfest16, and it immediately struck a chord. Not just with me, but with quite a few people. In fact, the Future Generations Commissioner for Wales also picked up on it. The idea is explained further in this article ‘Olives and Elephants: the evidence problem in behaviour change’, where Dr Harry Rutter from Public Health England is credited with introducing the phrase.

For me, there are some key points relevant to #behfest16 in Dr Spotswood’s paper:

- Is all evidence equal? Is there a preference for quantitative ‘hard’ data over everything else?

- What to collect? Does this lead to a bias towards the evidence we ‘can’ collect, rather than evidence we ‘need’ to, or ‘should’ collect?

- Oversimplification. Does this miss out on the complexities of real life and try to oversimplify things that aren’t actually straightforward?

There’s a huge amount to think about here. I have written about something similar previously in Expert Bias and The Ladder of Inference. It needs a lot more thinking and debate, hopefully at #behfest16, which I can report on in a future post.

Behaviour Change Techniques and Theory Taxonomy. Another part of Dr Spotswood’s paper links to work that has been done to try to bring together the large number of behaviour change techniques that exist (the whole olive) into something with some order and structure (a taxonomy if you are feeling scientific).

I highly recommend having a look at the Behaviour Change Techniques and Theory website, hosted by UCL, to get an idea of the work carried out by a number of Universities.

One standout statistic can be found in the Annals of Behavioral Medicine, Volume 46, August 2013, link here. In summary, the paper reports that there are 93 distinct Behaviour Change Techniques (BCTs). Each one of the 93 BCTs was proven to work and had evidence to support this, which is why it found itself on the list of 93.

Are We Wasting Time Chasing Innovation? The fact that there are 93 proven BCTs already in existence does raise an interesting question. Shouldn’t we be trying some of these before we go looking for something new? Is ‘innovation’ something you try once you’ve exhausted the existing possibilities?

This is an interesting question I’ve heard raised several times at #behfest16, frequently by Dr Carl Hughes, the Director of the Wales Centre for Behaviour Change. As with the ‘dangerous olive’ question, this is something else that could do with a bit more discussion across the community.

So, What’s the PONT?

- The amount and type of evidence people use to make decisions (or policy) can depend upon many variables. Overall it is probably better to have a lot of evidence from a wide range of sources, rather than a small amount from a very narrow field.

- Choosing what is useful evidence is difficult, but it shouldn’t be driven by what ‘can’ be collected rather than what ‘should’ be collected.

- There are a lot of BCTs in existence (93) that have evidence to prove they work. Should we be looking at these before chasing ‘new’ and ‘innovative’ alternatives?

This was a post from week 2 of the Festival of Behaviour Change at Bangor University, programme link here.
